The German Research Foundation (Deutsche Forschungsgemeinschaft, DFG) has announced the formation of a new Collaborative Research Centre/Transregio (TRR) on “Explainability of artificial intelligence (AI)” at the universities of Paderborn and Bielefeld. Over the next four years, the foundation will provide about 14 million euros in funding for the project. The highly interdisciplinary research program, titled “Constructing Explainability,” goes beyond the issue of explaining AI as the basis for algorithmic decision-making. The approach requires people to participate actively in socio-technical systems. The goal is to improve human-machine interaction, to place the understanding of algorithms at the center, and to study that understanding as the product of a multi-modal explanation process. The funding period begins on July 1.

Today, artificial intelligence is an intrinsic part of modern life – it sorts through applications, evaluates X-ray images, and suggests new playlists. These processes are based on algorithmic decision-making. “Citizens have a right to transparent algorithmic decisions. The goal of making algorithms accessible is at the heart of eXplainable Artificial Intelligence (XAI), where the focus is on transparency, interpretability and explainability as the desired result,” says Prof. Katharina Rohlfing, spokesperson for the new Collaborative Research Centre. “The problem is that in our digital society, algorithmic approaches like machine learning are quickly becoming increasingly complex. This opacity is a serious problem in every context, but especially when people need to make decisions based on an opaque situation,” adds Prof. Philipp Cimiano, deputy spokesperson. Particularly for predictions in medicine or law, Cimiano continues, it is necessary to understand the machine-controlled decision process. While some approaches do focus on the explainability of such systems, the resulting explanations require prior knowledge of how AI works. According to Cimiano and Rohlfing, what is missing are concepts for co-constructing explanations, in which the addressees – in other words, the people – are more strongly integrated into the AI-controlled explanation process.

Rohlfing: “In our approach, we assume that explanations are clear to the users only if they are created not just for the users, but with them. In explanations between people, this is ensured by the interaction between the participants, who can ask questions and express confusion.” The interdisciplinary team brings together linguists, psychologists, media researchers, sociologists, economists and computer scientists, who work closely to research the principles and mechanisms of explanation and understanding as social practices and how these can be implemented in AI systems. In addition, the team studies how the co-construction of explanations in the interaction between human and machine establishes new social practices, as well as the societal impact of these practices.

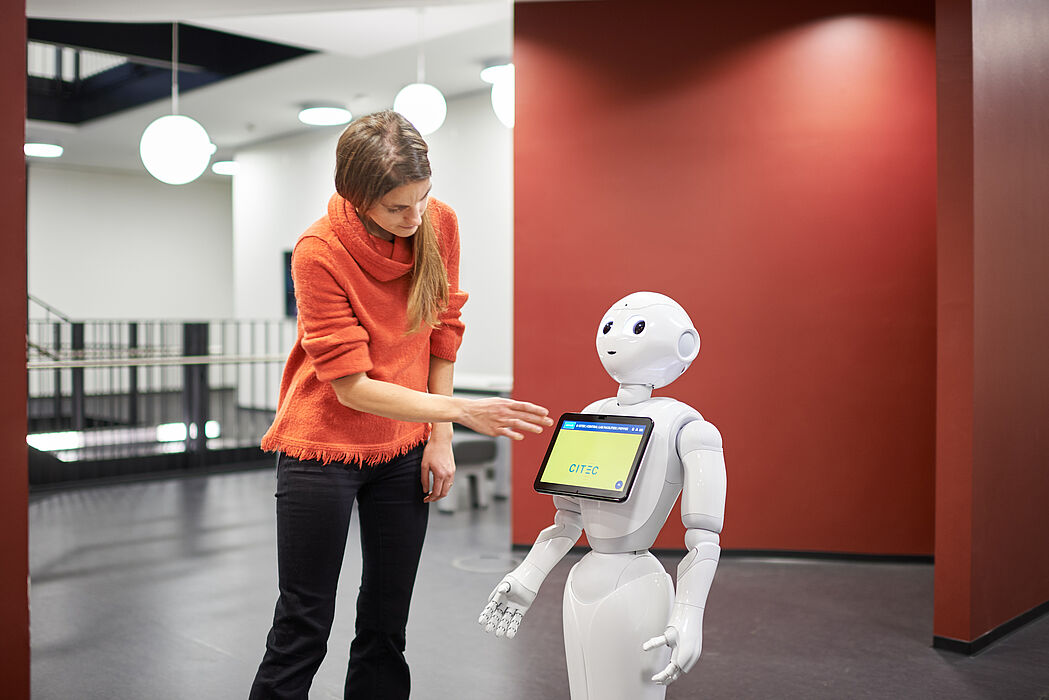

The approach is intended to provide new answers to societal challenges relating to artificial intelligence. The central idea is for people to participate in socio-technical systems that also promote users’ informational sovereignty. “Our goal is to create new forms of communication with truly explainable and comprehensible AI systems, thereby facilitating new forms of assistance,” sums up Rohlfing.

Nina Reckendorf, Department of "Press, Communications and Marketing"