Key research area “Digital Humanities”

In the key research area Digital Humanities, Paderborn's computer science and humanities are breaking new ground together. Research in the humanities raises new challenges for computer science, e.g. in working with non-standard data. Conversely, the digital processing and analysis of research objects leads cultural studies to significantly expand and reflect on its canon of methods. A particular focus of cooperation is the interdisciplinary development of technology and methods as well as of data and encoding standards for the cultural sciences, the latter in dialogue with the relevant international standardisation initiatives and the corresponding committees, institutions and alliances.

How humanities and computer science can successfully conduct research in constant mutual exchange can be seen, for example, in the Zentrum Musik – Edition – Medien (ZenMEM), where edition approaches in musicology and media studies are further developed through new data formats, visualisation procedures and analysis methods. Moreover, in Paderborn, methods of machine learning expand the spectrum of data-driven possibilities for gaining new insights in the field of Digital Humanities. The variety of textual and hybrid data covered in Paderborn's cultural sciences (including graphic novels and graffiti) in combination with the broad spectrum of computer science research contributes to the development of a specific Digital Humanities research profile at Paderborn.

Research contact: Prof. Dr. Andreas Münzmay and Prof. Dr. Axel-Cyrille Ngonga Ngomo

Research areas involved: humanities and computer science

Research Activities

Beethoven in the House is a DH research project planned to run for just under three years that brings together researchers from Paderborn University, the Beethoven-Haus Bonn and the e-Research Centre of the University of Oxford. The team will study piano and chamber-ensemble arrangements of Beethoven's symphonic works in order to determine what role these arrangements, as the “leading musical medium” of their time, played in the dissemination of concert music in the 19th century, before the advent of broadcasting and recordings.

An integral part of this research process is the modelling and development of digital tools that facilitate the review and analysis of the material. From a musicological point of view, the aim is to trace the particular features of these arrangements: the means by which they translate the musical characteristics and timbres of the orchestral works for other ensembles, and the (social) function that was attributed to them. What is new from the perspective of the Digital Humanities is the combination of digital music edition and Linked Open Data that this requires: because of the abundance of material, only part of it can be indexed in full, yet it must still offer enough anchor points for the planned content indexing and commentary.

It is precisely this openness to heterogeneously indexed data that represents a significant step towards making digital methods more practicable. Arrangements for domestic music-making, an absolute mass phenomenon of the 19th century, are an ideal test case and also promise new substantive insights.

Further information: https://www.muwi-detmold-paderborn.de/forschung/beethoven-in-the-house

The research project Beethoven's Workshop, funded since 2014 by the German Academy of Sciences and Literature, is dedicated to Beethoven's compositional working processes. This project in the field of music philology rests on two closely related research areas: alongside the methods of genetic textual criticism that are being developed, digital techniques and forms for adequately presenting the research results are being developed. The methods and visualisation options are also intended to be transferable to other composers. For the research work, the encoding format MEI (Music Encoding Initiative) is used and extended.

The music-related genetic textual criticism being developed takes up both the working methods of musical sketch research and the methods of the French, literature-oriented critique génétique. The project investigates compositional thinking and working processes and tries to reconstruct the creation of a composition from the available workshop documents.

Further Information: http://beethovens-werkstatt.de/

In the digital age, the concepts of culture and education have changed just as much as the formats available for communicating them. Against this background, foreign cultural and educational policy (Auswärtige Kultur- und Bildungspolitik, AKBP), the third pillar of the German federal government's foreign policy, has gained in importance and momentum. Setting up a new culture, research and education platform in this field, devoted to what is arguably a particularly complex EU country in terms of its linguistic and constitutional architecture, is a demanding undertaking that raises questions about tasks, goals, technologies, structures and content alike. The platform Belgien.Net, launched in 2019, has taken on this task. Its aims are to provide knowledgeable and vivid information about Belgium, a neighbouring country of importance to Germany and to North Rhine-Westphalia in particular, to generate new content in new formats, to moderate exchange with the international research community, and to open up new digital formats for teaching and culture. In exchange with the IT company code-x and the IMT, process-oriented considerations for establishing such a platform were discussed, tested and developed.

Since 2019, a third-party-funded project with the State Chancellery of North Rhine-Westphalia, the Belgian Embassy in Berlin and other partners in Germany and abroad.

Further information: https://belgien.net/

The centre, which is funded by the North Rhine-Westphalian Ministry of Culture and Science, is dedicated to research on the history of women in science and philosophy. The knowledge acquired here is intended to have an even stronger impact on future teacher training, since teachers are important multipliers who can make clear that there are not only great male philosophers and their doctrines. The knowledge gained will be compiled in a digital encyclopedia. In addition to research on the history of women scientists, the centre is also dedicated to research on the writings of these women from the 18th century as well as on early phenomenologists. Together with partners in Australia, Israel, Canada and the USA, and with a European network including countries such as Finland, France, Iceland, Italy, Croatia, Spain, Turkey and Hungary, the aim is to strengthen the network of researchers in this field.

Further Information: https://historyofwomenphilosophers.org/

In our digitized society, algorithmic approaches (such as machine learning) are rapidly increasing in complexity, making it difficult for citizens to understand the assistance they provide and to accept the decisions they suggest. In response to this societal challenge, research has started to push forward the idea that algorithms should be explainable or even able to explain their own output. This has led to the lively development of systems that provide explanations in an intelligent way (explainable AI or XAI). In our interdisciplinary initiative “Constructing Explainability”, which joins forces from the Universities of Paderborn (UPB) and Bielefeld (UBI) and across different disciplines (Linguistics, Psychology, Media Studies, Sociology, Economics and Computer Science), we critically discuss existing approaches and develop a new, co-constructive view of explanations. Our approach promotes humans’ active and mindful participation in sociotechnical settings with AI technologies, thus increasing their informational sovereignty. Our goal is to extend current research in (computer) science to include new perspectives from other disciplines and to offer new answers to the aforementioned societal challenge.

URL of this publication: https://ieeexplore.ieee.org/document/9292993

Members of this initiative: Heike M. Buhl (UPB), Hendrik Buschmeier (UBI), Philipp Cimiano (UBI), Elena Esposito (UBI), Angela Grimminger (UPB), Reinhold Häb-Umbach (UPB), Barbara Hammer (UBI), Ilona Horwath (UPB), Eyke Hüllermeier (UPB), Friederike Kern (UBI), Stefan Kopp (UBI), Tobias Matzner (UPB), Axel-Cyrille Ngonga Ngomo (UPB), Katharina J. Rohlfing (UPB), Ingrid Scharlau (UPB), Carsten Schulte (UPB), Kirsten Thommes (UPB), Anna-Lisa Vollmer (UBI), Henning Wachsmuth (UPB), Petra Wagner (UBI), Britta Wrede (UBI)

Contact persons: Katharina J. Rohlfing, katharina.rohlfing@upb.de (UPB) and Philipp Cimiano, cimiano@cit-ec.uni-bielefeld.de (UBI)

In our increasingly data-driven world, handling data in a self-determined and secure way is becoming a key competence, not only in academia and on the labour market but also for the development of our society. The coronavirus pandemic is currently making alarmingly clear how important data skills are for every one of us. The aim of DataLiteracySkills@OWL, a joint project of Bielefeld University, Paderborn University and Bielefeld University of Applied Sciences, is therefore to strengthen data literacy skills.

Data literacy here means the ability to handle data in a planned and reflective way and to use and question it consciously in the respective context: Where can suitable data be found? How is data collected and evaluated? Which data may be used, and how? Everything from collecting and managing data to critically assessing and applying it requires fundamental skills. Students need these skills in order to be able to act academically and socially in all sectors and disciplines in the future and to shape the digital transformation.

Further information: https://www.campus-owl.eu/projekte/dalis

The aim of the project Diccionario del Español Medieval electrónico (DEMel) is to digitize the comprehensive lexicographic data archive of paper slips of the Diccionario del Español Medieval (DEM). In this way, DEMel will provide free online access to this valuable archive of medieval Spanish comprising approximately 31,000 lemmata with about 700,000 dictionary slips including one or more attestations.

Access to DEMel: https://demel.uni-rostock.de

Further Information:

http://kw.uni-paderborn.de/institut-fuer-romanistik/demel/

The DFG project, which has been running since September 2014, is the first to examine in detail the rich sources preserved from the heyday of the Detmold Court Theatre, and it is developing an XML-based model for the in-depth indexing of comparable holdings.

The source descriptions are based on the RISM data records available since the 1980s, which thanks to RISM's Linked Open Data Initiative can be converted into the data format of the Music Encoding Initiative (MEI) and subsequently enriched, e.g. with incipits of the musical numbers, data on persons, and the cuts and inserts contained in the materials.

All documents are recorded in MEI or TEI, either completely or as regesta (summaries), so that the interaction of both standards can be tested intensively and, above all, MEI can be further developed with regard to its use in music library applications. Facsimiles are also integrated by way of example with the help of the Edirom tools. The project is designing a model that can be used in the long term for similar projects.

Further information: https://hoftheater-detmold.de

The project (DFG LIS) is a joint project of SLUB Dresden and ZenMEM at Paderborn University and has been approved for two years (2021–2022).

Within the project, the DFG Viewer is being extended with the following capabilities for displaying musical sources:

- Extension of the metadata and structural data to include scholarly significant metadata such as further groups of persons (arranger, scribe, performer, etc.) and events.

- Because of their great importance for the structure of music, measure positions are to be displayed. In addition, annotations on the source material will be displayed.

- Full texts (MEI, MusicXML) of the musical sources are to be displayed alongside the facsimile.

- Related sources (performance parts, handwritten and printed materials of a work) are to be displayed in a parallel view and traversed synchronously along their temporal dimension. Alignment with audio or video recordings is also planned.
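The parallel, measure-synchronised view described in the list above can be illustrated with a minimal sketch. It is not the DFG Viewer's implementation: the function names, the source labels and the zone format (page identifier plus a pixel rectangle) are assumptions made purely for illustration.

```python
# Hypothetical sketch: each source maps a measure number to the facsimile
# zone (page id, (x, y, width, height)) in which that measure appears.

def parallel_view(sources, measure):
    """Return, for every source, the zone showing `measure` (None if absent)."""
    return {name: zones.get(measure) for name, zones in sources.items()}


def step_through(sources, first, last):
    """Yield the parallel view for each measure from `first` to `last` inclusive."""
    for measure in range(first, last + 1):
        yield measure, parallel_view(sources, measure)


# Invented demo data: two related sources of one work.
demo_sources = {
    "printed score": {
        1: ("page-1", (100, 200, 80, 120)),
        2: ("page-1", (180, 200, 85, 120)),
    },
    "violin part": {
        1: ("page-1", (50, 90, 60, 40)),
    },
}

for measure, view in step_through(demo_sources, 1, 2):
    print(measure, view)
```

Using the measure number as the shared key is one simple way to move through several sources in step; a source that lacks a measure simply yields `None` for it.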

All these adaptations and extensions for musical sources serve not only to present the digitised items better and in a more user-friendly way, but also to link scholarship and library more closely: the library presents not only its holdings but also the scholarly findings already obtained about them. At the same time, by integrating the scholarly data, the library makes their long-term archiving possible.

Further information: https://www.muwi-detmold-paderborn.de/forschung/dfg-viewer-fuer-musikalische-quellen

The Dialektatlas Mittleres Westdeutschland (DMW) is a 17-year project that systematically collects, evaluates and interprets the dialects, and the speech styles furthest removed from the standard language, of two generations of speakers in central western Germany (North Rhine-Westphalia and parts of Lower Saxony and Rhineland-Palatinate) on the phonetic-phonological, morphological, syntactic and lexical levels.

The DMW atlas is digital: dialectal language is collected, processed and stored in a complex, structured database. It is dynamic, since dialectal information is presented to users in the web browser in the form of maps, which are generated on request by means of a database query. It also speaks, since the digital recordings of dialect speech can be played back with a simple mouse click on the map.
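To make the idea of maps "generated on request by means of a database query" concrete, here is a minimal sketch using Python's built-in `sqlite3` module. The table layout, column names, demo rows and the GeoJSON-style output are all assumptions for illustration; nothing here reflects the actual DMW database schema.

```python
import sqlite3


def build_demo_db():
    """Create an in-memory database with a hypothetical attestation table."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE attestation ("
        "place TEXT, lat REAL, lon REAL, variable TEXT, variant TEXT, audio TEXT)"
    )
    # Invented demo rows, not real survey data.
    conn.executemany(
        "INSERT INTO attestation VALUES (?, ?, ?, ?, ?, ?)",
        [
            ("Place A", 51.7, 8.7, "item-1", "variant-x", "a1.wav"),
            ("Place B", 51.9, 8.5, "item-1", "variant-y", "b1.wav"),
            ("Place A", 51.7, 8.7, "item-2", "variant-z", "a2.wav"),
        ],
    )
    return conn


def map_layer(conn, variable):
    """Query all attestations of one variable and return them as map features."""
    rows = conn.execute(
        "SELECT place, lat, lon, variant, audio FROM attestation "
        "WHERE variable = ? ORDER BY place",
        (variable,),
    ).fetchall()
    return {
        "type": "FeatureCollection",
        "features": [
            {
                "type": "Feature",
                # GeoJSON orders coordinates as [longitude, latitude].
                "geometry": {"type": "Point", "coordinates": [lon, lat]},
                "properties": {"place": place, "variant": variant, "audio": audio},
            }
            for place, lat, lon, variant, audio in rows
        ],
    }


conn = build_demo_db()
print(map_layer(conn, "item-1"))
```

Each request produces a fresh feature collection, so the map always reflects the current database contents, and the `audio` property gives a front end something to play when a point is clicked.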

Further Information: http://www.dmw-projekt.de/

The “EcoGest” project is developing a computational model that, in the form of a virtual child, reproduces the verbal and gestural behaviour of four- and five-year-old children. A visual working memory is implemented in the model for gesture production and a conceptual working memory for speech production. The model is developed on the basis of a quantitative and qualitative analysis of the communicative behaviour of about 40 participants in various situations. In addition, an intelligence test is used to assess the participants' spatial reasoning abilities and fluid intelligence.

Further information: https://scs.techfak.uni-bielefeld.de/ecogest/

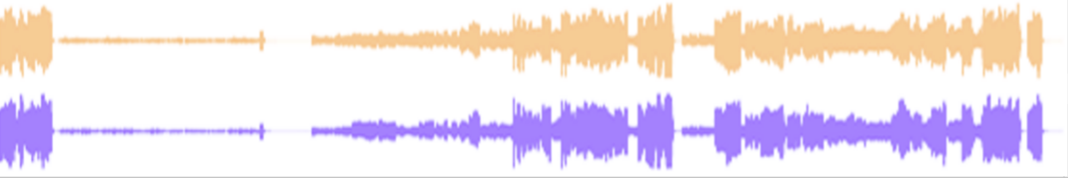

The project, which started in January 2021 and was initiated by Prof. Dr. Rebecca Grotjahn, is funded by the Research Award of Paderborn University. Its aim is to realise the edition of phonographic music for the first time, on both a methodological and a practical level.

To achieve this, the project team is formulating a philology of phonographic music. It is intended to enable an understanding of writing and text that also takes phonographic writing into account as a primary source and knows how to deal with it. For editorial practice, a tool is being developed with which a sound recording can be converted into visual data and annotated by means of synchronisation points. These data form the metatext, which is made available to a broad user base. The tool automatically recognises audio files loaded into it and, where one already exists, retrieves the associated metatext.
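The following sketch illustrates, under stated assumptions, how annotations tied to synchronisation points and the lookup of existing metatext for a loaded audio file might be modelled. It is not the project's tool: the class and function names are hypothetical, and recognising a file by a SHA-256 hash of its bytes is a simplifying assumption that only matches byte-identical files, unlike real audio fingerprinting.

```python
import bisect
import hashlib


class Metatext:
    """Annotations attached to synchronisation points (seconds into a recording)."""

    def __init__(self):
        self._times = []  # kept sorted
        self._notes = []  # parallel to _times

    def add(self, seconds, note):
        """Insert an annotation while keeping the points ordered by time."""
        i = bisect.bisect_right(self._times, seconds)
        self._times.insert(i, seconds)
        self._notes.insert(i, note)

    def between(self, start, end):
        """Return all (time, note) pairs with start <= time <= end."""
        lo = bisect.bisect_left(self._times, start)
        hi = bisect.bisect_right(self._times, end)
        return list(zip(self._times[lo:hi], self._notes[lo:hi]))


def fingerprint(audio_bytes):
    """Assumed stand-in for audio recognition: hash of the exact file bytes."""
    return hashlib.sha256(audio_bytes).hexdigest()


def metatext_for(audio_bytes, store):
    """Look up existing metatext for a loaded file; None if there is none yet."""
    return store.get(fingerprint(audio_bytes))


# Invented demo data.
demo = Metatext()
demo.add(12.5, "second annotation")
demo.add(3.0, "first annotation")
demo_store = {fingerprint(b"demo audio"): demo}
print(metatext_for(b"demo audio", demo_store).between(0, 15))
```

Keeping the metatext separate from the audio and addressing it only by time offsets matches the idea that the recording itself is never altered.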

The edition tool thus makes it possible to engage with phonographic music in a source-critical way without the audio objects themselves being edited. The philology of phonographic music therefore also promises a considerable gain in knowledge for research on popular music.

Further information: https://www.muwi-detmold-paderborn.de/forschung/edition-phonographischer-musik

Despite several calls to address this research desideratum in historical linguistics, key documents of the German resistance between 1933 and 1945 have so far been the subject of, at best, selective linguistic studies on National Socialism. The project intends to take systematic account of the heterogeneity of resistance communication in particular, which is explained firstly by links to differing social and/or political milieus, secondly by the actors’ differing positions, and thirdly by differing conflicts with and approaches to the National Socialist regime and the increasingly integrated society. Methodologically, the research project essentially combines linguistic hermeneutics with computer-based methods of corpus linguistics.

The project aims to use a representative digitised full-text corpus to investigate comprehensively how language is used to exercise resistance, which patterns of language use can be established, and which functions these patterns perform. Existing approaches to action-oriented discourse analysis form the basis of a discrete multidimensional model that is applied to analyse these resistance texts. To extrapolate patterns of action from the textual surface and to ascertain changes in these patterns, the tagset refers to the four levels of actions constituting reality, identity and relationships, and actions of contradiction, disproving and resistance. Concordance lines and cluster analysis are employed to explore the annotated data. To investigate serialisations and correlations between annotated language units on different levels, innovative ways of data analysis will be tested using the output files of CATMA (markups). The complexity of communicative practices such as inclusion and exclusion, requesting and accusing, will be shown not only separately, level by level, but also in their typical nexus.
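As an illustration of the concordance lines mentioned above, the following minimal sketch builds keyword-in-context (KWIC) lines from plain text. It does not use CATMA or its output format; the function name, the whitespace tokenisation and the demo sentence are assumptions chosen only to show the principle.

```python
def concordance(text, keyword, width=3):
    """Return (left context, hit, right context) for each occurrence of keyword.

    Tokens are split on whitespace; matching ignores case and
    surrounding punctuation. `width` is the number of context tokens per side.
    """
    tokens = text.split()
    lines = []
    for i, token in enumerate(tokens):
        if token.lower().strip(".,;:!?") == keyword.lower():
            left = " ".join(tokens[max(0, i - width):i])
            right = " ".join(tokens[i + 1:i + 1 + width])
            lines.append((left, token, right))
    return lines


# Invented demo sentence, not taken from the project corpus.
demo_text = "we resist and we write and we resist again"
for left, hit, right in concordance(demo_text, "resist", width=2):
    print(f"{left:>10} [{hit}] {right}")
```

Aligning every hit in a fixed column makes recurring patterns to the left and right of the keyword visible at a glance, which is the main purpose of a concordance view.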

Further Information: https://www.uni-paderborn.de/en/research-projects/heterogene-widerstandskulturen/

The launch of "Henze-Digital" (2021) marks the beginning of a long-term engagement at the Detmold/Paderborn site with the life, work and influence of the composer Hans Werner Henze (1926–2012). The materials (media of the 21st century) are researched and edited by applying and further developing digital-philological methods for the digital capture and FAIR publication of the research results.

In the first phase of work ("Digitale Briefedition: Hans Werner Henzes künstlerisches Netzwerk", i.e. a digital edition of letters on Hans Werner Henze's artistic network; DFG, 2021–2024), the project focuses on the correspondence with librettists (including Wystan Hugh Auden/Chester Kallman, Hans Magnus Enzensberger, Grete Weil/Walter Jokisch) and with Henze's patron and commissioner Paul Sacher. While the exchange of letters with Henze's librettists is mainly the venue for artistic and aesthetic debates, for instance about the weighting of music and language, or for political controversies over the chosen subjects, the extensive correspondence with Paul Sacher, who commissioned and conducted premieres and was the dedicatee of important works by Henze, gives a substantive account of works in progress.

Further information: https://www.muwi-detmold-paderborn.de/forschung/henze-digital-hans-werner-henzes-kuenstlerisches-netzwerk

The focus of the HiP App is to discover to what extent new technical possibilities can make the traces of Paderborn's history tangible for the user. The project therefore explores how images, text and space can be designed interactively on the user interface. German linguistics and medieval studies, art history, computer science and history will work together to create an innovative back-end and a user-friendly, interactive front-end. The app content will be compiled by students of Paderborn University who participate in specific seminars, and it will be recorded in multisensory portfolios. The front-end of the app is designed on this basis. Augmented reality is intended to awaken users' curiosity and encourage them to engage further with the city's history.

Further Information: https://www.sicp.de/en/projekte/hip-app/

Combining methods drawn from the cognitive sciences and digital humanities with narratology and literary history, the research group aims at a richer and empirically robust understanding of graphic literature and, in particular, the genre of the graphic novel. The group brings together scholars from psychology, computer science, as well as literary and cultural studies to contribute to the establishment of empirical methods in the humanities.

Further Information: https://groups.uni-paderborn.de/graphic-literature/wp/

The goal of the iART project is to develop an open Web platform, which is able to index large amounts of image data from art history and related disciplines and make them searchable. Specific methods will be designed for content-based search that take into account color, texture, and shape as well as the semantic content of the image. In order to achieve this goal, a generic lexicon of visual concepts will be created together with the project partners and the technical feasibility of the concepts will be evaluated with regard to the various object types. In addition, the system will include functions to cluster and structure large sets of images based on various definitions of similarity.
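To give a feel for content-based search by colour, one of the criteria named above, here is a minimal sketch based on colour histograms and histogram intersection. This is a classic baseline chosen for illustration, not a description of iART's methods; it ignores texture, shape and semantic content, represents images as plain lists of RGB tuples, and all names and demo data are hypothetical. Pixel lists are assumed to be non-empty.

```python
from collections import Counter


def color_histogram(pixels, bins=4):
    """Normalised histogram over coarse RGB bins (bins per channel)."""
    step = 256 // bins
    counts = Counter((r // step, g // step, b // step) for r, g, b in pixels)
    total = len(pixels)
    return {bin_id: n / total for bin_id, n in counts.items()}


def intersection(h1, h2):
    """Histogram intersection: 1.0 for identical distributions, 0.0 for disjoint."""
    return sum(min(share, h2.get(bin_id, 0.0)) for bin_id, share in h1.items())


def rank_by_color(query_pixels, collection):
    """Return image names ordered from most to least similar in colour."""
    query = color_histogram(query_pixels)
    scored = [
        (intersection(query, color_histogram(pixels)), name)
        for name, pixels in collection.items()
    ]
    return [name for score, name in sorted(scored, key=lambda t: (-t[0], t[1]))]


# Invented toy "images": four pixels each.
demo_collection = {
    "red": [(250, 10, 10)] * 4,
    "mixed": [(240, 20, 20)] * 3 + [(10, 10, 250)],
    "blue": [(10, 10, 250)] * 4,
}
print(rank_by_color([(255, 0, 0)] * 4, demo_collection))
```

The same pairwise similarity scores could also feed a clustering step, which is one way the grouping of large image sets by similarity might be approached.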

Further Information: https://projects.tib.eu/en/iart/about/

Graffiti is an urban phenomenon that is increasingly attracting the interest of researchers. Until now, there has been a lack of suitable data corpora for systematic research. The Graffiti Information System (INGRID) closes this gap: stocks of graffiti images, which were provided exclusively for scientific use within the project, are compiled in a database and made accessible to scientific researchers. At the same time, scientific standards for the digital recording and systematisation of graffiti will be developed.

INGRID currently contains more than 150,000 images from the period 1983 to 2015, which will be digitized and indexed in the coming years. For the first time, INGRID will allow researchers to examine developments and changes in graffiti over a longer period of time on the basis of comprehensive, reliable and high-quality research data, and to investigate the aesthetics of graffiti images, their specific textual (pictorial) nature, their grammaticality, their urban location as well as their social functions and meanings.

Further Information:

https://www.uni-paderborn.de/forschungsprojekte/ingrid/

This empirical research project investigates the language elaboration of Middle Low German from the 13th century to the written language shift in the 16th/17th century. At this time, Middle Low German lost its dominant position as a supraregional written language to Early New High German.

An interactive procedure is being developed that combines machine learning and expert feedback to solve one of the most central problems of existing annotation tools for historical texts. Existing parsing and tagging systems require static grammars and grammatical categories but these are of no use due to the historical dynamics of grammar. It is intended to discover an evolving, dynamic grammar by using rule-based text analysis techniques and machine learning methods. This enables the researchers to reconstruct the language elaboration in an evidence-based way, which is a novelty. This requires knowledge about historical language and grammar as well as knowledge about computational linguistics and computer science. Therefore, this project is an interdisciplinary one that requires a close cooperation over the whole funding period.

Further Information:

http://www.uni-paderborn.de/forschungsprojekte/intergramm/

ICILS (International Computer and Information Literacy Study) is an international comparative study of student achievement coordinated by the IEA (International Association for the Evaluation of Educational Achievement). Since 2013, ICILS has been examining the computer and information literacy of eighth-grade students. It also records the conditions under which these competences are acquired at various levels (school system, school, classroom and individual) as well as extensive information on learning and teaching with digital media in schools. Since the second study cycle (ICILS 2018), competences in computational thinking have additionally been examined in a supplementary module. The third cycle of the study (ICILS 2023) is currently being prepared; in Germany it is again funded by the Federal Ministry of Education and Research (BMBF) and co-financed by the European Commission. The study's national research centre is located at Paderborn University under the scientific direction of Prof. Dr. Birgit Eickelmann. More information and the results of previous ICILS studies can be found at upb.de/icils2018.

The project “KoLidi – Kollaborative Literaturgeschichte digital und interaktiv” (collaborative literary history, digital and interactive) is a consortium project of the universities of Paderborn, Bielefeld and Wuppertal, funded by the Ministry of Culture and Science of the State of NRW as part of the state-wide digitalisation initiative “OERContent.nrw”. At the three sites, a digital course is being created for the collaborative and interactive self-study of German literary history from the Middle Ages to the present. The jointly developed course will also be made available to other universities (nationally and internationally) via the platform of the Digitale Hochschule NRW.

The course is aimed equally at students of German studies in teacher-training and subject-specific degree programmes. To make literary history tangible, text packages are compiled digitally, and on this basis a Moodle-based multimedia course is developed using the H5P software. Students can be organised in small groups or use the learning materials for self-study.

The learning material itself is compiled for the universities in such a way that it can be extended, combined or reduced. In principle, it should be possible to integrate the online course into a degree programme in part or as a whole.

Further information: https://kw.uni-paderborn.de/institut-fuer-germanistik-und-vergleichende-literaturwissenschaft/forschung/kolidi

The competence centre sees itself both as a service unit in the field of teaching and as a coordinating body for research projects in the field of tangible and intangible cultural heritage. Since October 2006, under the direction of the Kompetenzzentrum für Materielles und Immaterielles Kulturerbe UNESCO, the Paderborn image archive (Paderborner Bildarchiv) has been set up and expanded, and various projects researching cultural heritage have been supported.

In 2013 the competence centre was renamed Kompetenzzentrum Kulturerbe: materiell – immateriell – digital in order to reflect its current research priorities. The research projects brought together by the competence centre are devoted to both tangible and intangible cultural heritage and to the field of e-humanities.

Further information:

https://kw.uni-paderborn.de/historisches-institut/materielles-und-immaterielles-kulturerbe/kompetenzzentrum

Funded by the state government of North Rhine-Westphalia within the funding line “Digitale Gesellschaft” (Digital Society), the project investigates, from a media-education and psycholinguistic perspective, whether and how the language development of preschool children can benefit from long-term interaction with a social robot. The project addresses aspects such as the development of child-oriented child-robot dialogue management, the investigation of long-term learning effects, and the exploration of early-childhood educators' views on the use of the technology.

Further information: http://graduiertenkolleg-digitale-gesellschaft.nrw/merits-fruehkindlicher-medienumgang-und-sprachlernen-mit-sozialen-robotern-zur-foerderung-von-teilhabechancen-in-der-digitalen-gesellschaft/

NFDI4Culture consists of a geographically, thematically and institutionally balanced network of 9 co-applicants and 52 participants. It aims to ideally represent the broad spectrum of different actors in the cultural heritage domain. The co-applicants comprise four universities (Cologne, Heidelberg, Marburg, Paderborn), three infrastructure institutions (FIZ Karlsruhe, TIB Hannover, SLUB Dresden), Germany’s largest institution in the GLAM sector (Stiftung Preußischer Kulturbesitz) and the Academy of Sciences and Literature | Mainz. This group is joined by 11 academic societies each representing one of the research domains that together make up the community of interest.

Further information: https://www.nfdi4culture.de/

The internet platform "Nova Corbeia - Die virtuelle Bibliothek Corvey" was realised by the Chair of Tangible and Intangible Cultural Heritage at Paderborn University, headed by Professor Eva-Maria Seng, and the Erzbischöfliche Akademische Bibliothek Paderborn, headed by Dr. Hermann-Josef Schmalor. For the first time, it provides virtual access to the book holdings of the former monastic library of the Imperial Abbey of Corvey. The aim of the project is to virtually reunite all surviving book holdings that were originally gathered in the library of the Benedictine monastery at Corvey and that have been transferred to various institutions since its dissolution in the early 19th century. In the virtual library of Corvey, the medieval manuscripts can be viewed as full facsimiles and early printed books can be searched via a search form. The platform also offers extensive information on topics such as history, architecture, libraries and holdings, and plans and floor plans relating to the monastery and Schloss Corvey, as well as current scholarly publications. The platform is continuously being expanded and supplemented with further digitised manuscripts and catalogue entries.

In the travelling exhibition realised following the project, which was shown at six venues from 2011 to 2013, the findings were communicated to a broad public. To keep the contents of the travelling exhibition accessible to researchers and the public after it ended, the exhibition was also realised as a virtual exhibition on the internet and extended with multimedia modules. The digital presentation of the exhibits online is structured into three different rooms with thematic stations, through which virtual visitors can move intuitively and freely with a click of the mouse.

Further information: http://nova-corbeia.uni-paderborn.de

The interactive, multimodal OWL.Kultur-Portal (Ostwestfalen-Lippe Culture Portal) is intended to bundle the region's cultural offerings and make them more visible and usable in the future, as well as to set up as many interfaces as possible to existing systems and other services. It addresses cultural providers, cultural mediators and users of cultural services and makes it possible to find suitable cultural offers by means of individualised filter options, improve the networking of cultural actors across different areas, strengthen the visibility of voluntary work and associations, overcome regional borders and - especially for rural areas - guarantee mobility in order to enable cultural participation for all. Cultural operators benefit from the OWL.Kultur-Portal, as it also provides support services for the organisation of cultural events and projects. This intelligent, target-group-specific and user-oriented assistance system can thus contribute to establishing OWL as a cultural brand by making the cultural public more aware of the OWL cultural region as a whole.

Further Information: https://www.sicp.de/en/projekte/owlkultur-portal/

The project t.evo aims to identify suitable indicators of diachronic change in text patterns, based on two highly relevant genres in recent language history. The study will use corpus-based methods and draw on digital resources and tools for corpus analysis and annotation that other projects have made available for re-use (e.g. German Text Archive, WebLicht, CATMA).

In t.evo, we plan to systematically investigate patterns of the structural, functional, social, and stylistic dimensions of texts. Since patterns associated with these dimensions can mostly be acquired from the textual surface, we can draw on the results of conventional computational linguistic analysis tools. In addition, we will apply manual annotation wherever features cannot yet be reliably extracted with automatic methods. All features extracted will undergo statistical analysis and the results will then be verified and interpreted based on the actual text sources.

Analyses will be based on digital corpora of newspapers on the one hand and devotional literature on the other, two genres that had a firmly established place in the everyday life of a wide range of recipients in their time. The variety of text types considered is expected to yield far-reaching results on how diachronic change of text patterns manifests itself in general, so that the techniques developed by the project can also be applied to the analysis of further text types.

Further Information: https://www.uni-paderborn.de/en/research-projects/tevo/

A good cultural programme alone does not guarantee the success of a cultural institution. Every day, many decisions have to be made that affect visitor satisfaction as well as the economic situation of the organisation. In most cases, these decisions are based on personal experience. The TheaterLytics project aims to develop, for the first time, a decision support system for data-based revenue management and programme design.

Further information: https://wiwi.uni-paderborn.de/dep3/winfo2/forschung/projekte/theaterlytics

The WeGA (Carl-Maria-von-Weber-Gesamtausgabe) is an initiative sponsored by the Academy of Sciences and Literature with the goal of presenting all of Weber's compositions, letters, diaries and writings in a scholarly critical edition by the 200th anniversary of his death in 2026. The edition will consist of approx. 50 volumes of musical scores including critical reports, 10 volumes of letters, approx. 8 volumes of diaries, 2 volumes of writings, a catalogue of works and several document volumes. All text parts (i.e. everything except the musical notation) will initially be published as digital editions. The edition is being prepared under the direction of Prof. Dr. Gerhard Allroggen at two research sites: the Staatsbibliothek zu Berlin - Preußischer Kulturbesitz and the Musicology Seminar Detmold/Paderborn. The Weber-Studies series will be published in parallel with the edition.

Further Information: https://www.weber-gesamtausgabe.de/en/Index

The interdisciplinary collaborative project "Wesersandstein als globales Kulturgut - Innovation in der Bauwirtschaft und deren weltweite Verbreitung in vorindustrieller Zeit (16.-19. Jahrhundert)" (Weser sandstone as a global cultural asset: innovation in the building industry and its worldwide dissemination in pre-industrial times, 16th-19th century) was funded by the Federal Ministry of Education and Research (BMBF) from 2014 to 2016. Under the direction of Prof. Dr. Eva-Maria Seng (University of Paderborn), Prof. Dr. Frank Göttmann (University of Paderborn), Dipl.-Ing. Marc Grellert (TU Darmstadt), Dr. Dipl.-Ing. Mieke Pfarr-Harfst (TU Darmstadt) and Prof. Dr. Reinhard Keil (University of Paderborn), a team of art historians, economic historians, architects and computer scientists used example objects such as the façade of Leiden town hall and the Bremen stock exchange to study the prefabrication of buildings, transregional export and the associated cultural transfer. The project addressed material and technical, commercial and business, and art-historical questions connected with the spread of sandstone from the Upper Weser and Vechte regions (north-western Germany). Both sandstone deposits were grouped in the project under the working term "Wesersandstein".

Since the beginning of December 2017, key research results of the BMBF collaborative project WeSa have been available to both the interested public and specialist researchers. The database, developed specifically for this purpose at the University of Paderborn on the basis of OMEKA, offers a data pool of around 12,000 entries. Numerous detailed records document, among other things, the latest findings on the pre-industrial prefabrication of architectural components and buildings and on the economic infrastructure of the international sandstone trade in north-western Europe.

Further information: www.upb.de/wesa

ZenMEM emerged from a BMBF-funded collaborative project (2014-2020) in which researchers from the University of Paderborn, the Detmold University of Music and the Ostwestfalen-Lippe University of Applied Sciences worked together in digital musicology, music and film informatics, media studies, media technologies and several areas of computer science. In this project, the three institutions set themselves the goal of establishing a centre of excellence at the interface of computer science and the humanities.

ZenMEM is a decidedly open association of researchers at the University of Paderborn. It strives to jointly promote and establish new, digitally supported research possibilities in the field of cultural studies and to connect them to international developments.

All projects carried out by ZenMEM and ViFE in Detmold/Paderborn in the context of the Digital Humanities are related to each other via (at least) one of ZenMEM's core topics: digital (music) editions in the broadest sense, the coding standards of the MEI and the TEI, or research data management around music notation, metadata, image, audio and video data and texts with musical reference. Through a close exchange between the projects, ZenMEM and ViFE promote the coordinated further development of coding standards, the methodological and theoretical further development of digital editions and the coordination and standardisation of research data management in cooperation with memory institutions.

Further information: https://zenmem.de